Technological Framework


Article by '''Dr Lukasz Porwol''' & '''Dr Abdul Wahid'''

== TECHNOLOGICAL FRAMEWORK FOR WEB 4.0 & VIRTUAL WORLDS – OVERARCHING VIEW ==

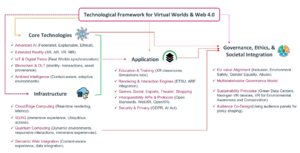

This section presents an overview of the technological building blocks fundamental to the development of Web 4.0 and virtual worlds. It outlines an integrated, multilayered technological framework designed to support intelligent, immersive, secure, and inclusive digital environments. By examining each layer, from core technologies through infrastructure, middleware services, and application domains to governance and ethics, it provides essential context for the interplay between technological innovation and societal impact. Understanding this overarching framework is crucial for policymakers, developers, businesses, and other stakeholders who wish to strategically navigate and influence the evolution of virtual ecosystems. The framework serves not only as a reference architecture but also as a roadmap for the informed, responsible, and sustainable adoption and development of the technologies underpinning Europe's position as a leader in Web 4.0.

The technological framework for Web 4.0 and virtual worlds encompasses an ecosystem of interconnected layers, each contributing to the creation of intelligent, immersive, and secure digital environments. At the foundation are core technologies such as artificial intelligence, extended reality, and blockchain, which provide the algorithmic sophistication and interaction capabilities necessary for advanced virtual experiences. Building on this, the infrastructure layer incorporates elements such as 5G networks, edge computing, cloud services, and, in the longer term, quantum computing, supplying the connectivity and computational power needed across diverse devices and platforms. Middleware services act as a bridge, facilitating communication between the underlying infrastructure and user-facing applications while also providing essential functionalities such as identity management, data interoperability, and security protocols. The application layer covers the diverse domains where Web 4.0 and virtual world technologies find practical implementation, ranging from immersive entertainment and social interaction to industrial simulations and educational experiences, and demonstrates the transformative potential of these technologies across various sectors of society and the economy. Overarching all these technical components is the governance and ethics layer, which addresses crucial aspects such as data privacy, digital rights, and ethical considerations in the development and use of virtual world technologies. This framework not only provides a blueprint for technological development but also emphasises the importance of responsible innovation, ensuring that the advancement of Web 4.0 and virtual worlds aligns with societal values and contributes positively to human progress.

[[File:TechFrameworkPicture1.png|thumb|'''Figure 1''': High-Level Technological Framework for Web 4.0 and Virtual Worlds. This diagram illustrates the foundational components and critical enablers underpinning the emerging ecosystem of Web 4.0 and virtual worlds. At the core lies the Web 4.0 paradigm, characterised by deeply immersive, intelligent, and interoperable digital environments, surrounded by five key pillars.]]

===Core Technologies Layer===

The Core Technologies Layer constitutes the fundamental building blocks of the Web 4.0 ecosystem, encompassing the essential technologies necessary to develop intelligent, immersive, and interactive virtual worlds. This foundational layer integrates Artificial Intelligence (AI), Extended Reality (XR), the Internet of Things (IoT) combined with digital twins, Blockchain and Distributed Ledger Technologies (DLTs), and Ambient Intelligence. Together, these technologies significantly enhance functionality, interactivity, and user experience within virtual worlds.

*'''Artificial Intelligence''' forms a central pillar within this ecosystem, enabling advanced automation, personalisation, and dynamic adaptability of virtual content and interactions. Spanning specialised task-driven models, generative AI, and Large Language Models (LLMs), AI equips virtual worlds with capabilities such as dynamic content generation, intelligent non-player characters (NPCs), and contextually responsive environments. Approaches such as federated learning keep training data on user devices and share only model updates, which enhances user privacy and supports privacy-by-design principles. Explainable AI (XAI) plays a critical role in ensuring transparency, accountability, and compliance, thereby building user trust in AI-driven decisions.

*'''Extended Reality (XR)''', comprising Augmented Reality (AR), Virtual Reality (VR), and Mixed Reality (MR), provides immersive, multisensory user experiences. By blending physical and digital realities, XR technologies enable rich spatial interactions, heightened presence, and enhanced sensory feedback, effectively serving as the user-facing interface of Web 4.0 environments.

*'''The convergence of IoT and digital twins''' bridges physical and virtual domains. Networks of sensors and smart devices continuously feed real-time data into virtual worlds, creating synchronised digital replicas of physical entities, ranging from individual structures and city-wide infrastructures to complex industrial or even biological systems. These digital twins facilitate simulation, continuous real-time monitoring, predictive maintenance, and advanced analytics across multiple sectors, including healthcare, smart manufacturing, robotics, and urban management.

*'''Blockchain and Distributed Ledger Technologies (DLTs)''' underpin crucial aspects of transparency, trust, and decentralisation within virtual worlds. Technologies such as Non-Fungible Tokens (NFTs) enable secure, verifiable ownership and exchange of digital assets. Additionally, smart contracts automate and enforce decentralised governance mechanisms, transaction management, and regulatory compliance, supporting transparent and autonomous operations within Web 4.0 applications.

*'''Ambient Intelligence''' combines AI, IoT, and pervasive computing to establish context-aware, responsive digital environments. Through continuous, adaptive sensing of user contexts and environmental conditions, Ambient Intelligence dynamically tailors virtual interactions to user-specific needs, preferences, and behaviours. This adaptive capability makes virtual environments intuitive, seamless, and deeply integrated into everyday activities.

Collectively, these core technologies define the functional and experiential capabilities of virtual worlds, establishing the robust foundation upon which the broader Web 4.0 ecosystem is built.
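The federated learning approach mentioned in the Artificial Intelligence item above can be illustrated with a minimal sketch of federated averaging. This is an illustration under stated assumptions rather than a reference implementation: the linear model, the learning rate, the number of rounds, and the two client datasets are all hypothetical, and a production system would add secure aggregation and client sampling.

```python
from typing import List, Tuple

Weights = List[float]            # [slope, intercept] of a toy linear model
Sample = Tuple[float, float]     # (x, y) pair held privately by one client


def local_update(weights: Weights, data: List[Sample],
                 lr: float = 0.05, epochs: int = 20) -> Weights:
    """Train y = w*x + b on one client's data with plain gradient descent."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return [w, b]


def federated_average(updates: List[Tuple[Weights, int]]) -> Weights:
    """Combine client weights, weighting each client by its sample count."""
    total = sum(count for _, count in updates)
    dim = len(updates[0][0])
    return [sum(wts[i] * count for wts, count in updates) / total
            for i in range(dim)]


def run_rounds(clients: List[List[Sample]], rounds: int = 100) -> Weights:
    """Server loop: broadcast weights, collect local updates, average them."""
    global_weights: Weights = [0.0, 0.0]
    for _ in range(rounds):
        updates = [(local_update(global_weights, data), len(data))
                   for data in clients]
        global_weights = federated_average(updates)
    return global_weights


# Hypothetical clients: each holds private samples drawn from y = 2x + 1.
# Only the weights returned by local_update ever leave a client.
clients = [
    [(0.0, 1.0), (1.0, 3.0)],
    [(2.0, 5.0), (3.0, 7.0), (4.0, 9.0)],
]
model = run_rounds(clients)
```

The raw samples never reach the server; it sees only the per-client weights and sample counts, which is the property that makes the technique attractive for privacy-by-design.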

===Infrastructure Layer===

The Infrastructure Layer provides the essential computational and networking backbone necessary for scalable, secure, and real-time virtual world experiences. This layer integrates cloud and edge computing, advanced telecommunications networks (5G and 6G), quantum computing, and semantic knowledge graphs, collectively enabling sophisticated interactions, high-performance processing, and seamless user experiences.

*'''Cloud and edge computing''' constitute critical components of this infrastructure, supporting the rendering of high-fidelity virtual content and enabling real-time interactivity within immersive environments. Edge computing enhances responsiveness by processing data closer to the end-user, reducing latency and optimising performance for XR applications. Complementing edge computing, cloud computing delivers highly scalable and elastic resources, capable of accommodating the substantial computational demands of large-scale virtual simulations, complex AI model training, and extensive data analytics tasks.

*'''Advanced telecommunications networks''', notably 5G and forthcoming 6G technologies, form the connectivity foundation for mobile, seamless Extended Reality (XR) experiences. 5G networks are designed to deliver low latency and high data throughput, both indispensable for synchronised, high-quality user interactions within virtual worlds. Looking ahead, 6G is expected to enhance these capabilities by embedding network intelligence and AI-driven functionality directly into telecommunications infrastructure, enabling innovations such as real-time collaborative XR, immersive holographic streaming, and more sophisticated context-aware communication environments.

*'''Quantum computing''', though currently at a nascent stage, has the potential to transform the computational landscape of virtual worlds. By exploiting quantum-mechanical effects, quantum computing promises breakthroughs in solving intricate optimisation problems, strengthening cryptographic security protocols, and accelerating complex simulations. As the technology matures, it is anticipated to become a critical asset for the most processing-intensive applications in sophisticated virtual environments.

*'''Semantic knowledge graphs''' offer structured methods for organising and interpreting vast quantities of interconnected data. They enhance contextual search, facilitate precise relationship mapping, and foster seamless interoperability across diverse platforms and domains. When integrated with artificial intelligence, semantic knowledge graphs power more intuitive, contextually relevant user interactions and knowledge-driven personalisation, significantly enriching the overall user experience within virtual worlds.

Collectively, the technologies within the Infrastructure Layer profoundly influence the capabilities and evolution of virtual worlds. Their integration supports continuous innovation, expands human-computer interaction possibilities, and establishes a robust foundation for the future growth of immersive digital ecosystems.
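To make the relationship mapping offered by semantic knowledge graphs more concrete, the sketch below stores facts as subject-predicate-object triples and follows one relation transitively. The class, the predicate names (`part_of`, `has_twin`), and the entities are hypothetical and chosen only for illustration; real deployments would typically use an RDF store and a query language such as SPARQL.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple


class KnowledgeGraph:
    """A tiny in-memory triple store indexed by subject."""

    def __init__(self) -> None:
        self._by_subject: Dict[str, List[Tuple[str, str]]] = defaultdict(list)

    def add(self, subject: str, predicate: str, obj: str) -> None:
        """Record the fact (subject, predicate, obj)."""
        self._by_subject[subject].append((predicate, obj))

    def objects(self, subject: str, predicate: str) -> List[str]:
        """Return every object linked to subject by predicate."""
        return [o for p, o in self._by_subject.get(subject, []) if p == predicate]

    def reachable(self, start: str, predicate: str) -> Set[str]:
        """Follow one predicate transitively, e.g. part_of up a hierarchy."""
        seen: Set[str] = set()
        stack = [start]
        while stack:
            node = stack.pop()
            for nxt in self.objects(node, predicate):
                if nxt not in seen:
                    seen.add(nxt)
                    stack.append(nxt)
        return seen


# Hypothetical facts about a factory and one of its digital twins.
kg = KnowledgeGraph()
kg.add("pump_17", "part_of", "line_A")
kg.add("line_A", "part_of", "factory_1")
kg.add("pump_17", "has_twin", "twin_pump_17")

containers = kg.reachable("pump_17", "part_of")
```

Here `containers` holds every structure that contains the pump, directly or indirectly, which is the kind of contextual query a flat key-value store cannot answer without extra logic.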

===Core Middleware & Services Layer===

The Core Middleware and Services Layer operates as a critical intermediary between the foundational infrastructure and the user-facing applications of Web 4.0 ecosystems. It provides essential functionalities including interoperability solutions, robust identity management, real-time rendering engines, and comprehensive privacy and security safeguards, effectively bridging technological capabilities with user needs.

A significant component of this layer is '''Decentralised Digital Identity''', particularly through Self-Sovereign Identity (SSI) systems. These systems leverage blockchain and distributed ledger technologies to provide users with secure, verifiable, and portable digital identity credentials. By avoiding centralised identity repositories, SSI enhances user privacy, autonomy, and data security, empowering users to seamlessly authenticate and manage identities across various virtual worlds and digital services.

'''Real-time rendering and interaction engines''' such as Unity, Unreal Engine, and Godot constitute another crucial aspect of this middleware layer. These engines support high-quality graphics rendering, physics simulations, and complex interactions, essential for immersive Extended Reality (XR) environments. Additionally, standardised frameworks such as the ETSI ARF (Augmented Reality Framework) provide modular blueprints for efficiently authoring, capturing, managing, and delivering rich digital content and interactive scenes, further promoting consistency and reusability across virtual world platforms.

To facilitate seamless integration and interoperability, this middleware layer employs standardised '''APIs and protocols'''. Standards such as WebRTC (real-time communications), WebXR (browser-based XR experiences), and OpenXR (cross-platform XR integration) enable consistent user experiences across different hardware devices and software ecosystems. Complementing these standards, efficient 3D asset formats such as glTF (GL Transmission Format) and USD (Universal Scene Description) simplify content management, promote interoperability, and ensure efficient asset reuse within diverse virtual environments.

'''Embedded security and privacy frameworks''' within this middleware layer are essential for protecting user data, communications, and transactional integrity. These frameworks incorporate mechanisms such as end-to-end encryption, zero-knowledge proofs, rigorous access control systems, and comprehensive audit trails. Such measures not only support compliance with data protection regulations such as the General Data Protection Regulation (GDPR) but also proactively address emerging regulatory requirements around artificial intelligence and data privacy. Collectively, these security provisions ensure the creation of trusted, secure, and privacy-respecting virtual world experiences, fostering user confidence and adoption.

Together, the elements of the Core Middleware and Services Layer establish a robust foundation that effectively integrates technological infrastructure with immersive, secure, and user-centric virtual environments.
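One of the mechanisms named above, the audit trail, can be made tamper-evident by chaining entries with a cryptographic hash. The following is a minimal sketch under stated assumptions: the entry layout, the `GENESIS` constant, and the example events are hypothetical, and a real system would also sign entries and store them durably.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder hash that precedes the first entry


def _digest(prev_hash: str, event: Dict) -> str:
    """Hash the previous entry's hash together with this event's canonical JSON."""
    payload = json.dumps(event, sort_keys=True).encode("utf-8")
    return hashlib.sha256(prev_hash.encode("utf-8") + payload).hexdigest()


def append_entry(log: List[Dict], event: Dict) -> None:
    """Append an event, linking it to the hash of the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    log.append({"event": event, "prev": prev_hash,
                "hash": _digest(prev_hash, event)})


def verify(log: List[Dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["event"]):
            return False
        prev_hash = entry["hash"]
    return True


# Hypothetical events from a virtual world platform.
audit_log: List[Dict] = []
append_entry(audit_log, {"user": "alice", "action": "login"})
append_entry(audit_log, {"user": "alice", "action": "transfer_asset", "asset": "hat_42"})
chain_ok = verify(audit_log)
```

Because each hash covers the previous one, changing any earlier event invalidates every later entry, so an auditor only needs to trust the most recent hash.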

===Application Domain Layer===

The Application Domain Layer translates the advanced capabilities provided by foundational technologies and middleware into tangible real-world impact. It addresses critical sectors, including education, healthcare, smart cities, smart manufacturing and robotics, entertainment, and governance, demonstrating the profound potential of virtual worlds and related technologies to reshape society and enhance human experiences.

Within the education and training sector, virtual worlds foster immersive learning environments through interactive 3D content, virtual classrooms, and realistic simulated laboratories. These innovations significantly enhance learner engagement, improve knowledge retention, and facilitate experiential learning at scale, making educational experiences more accessible, dynamic, and personalised.

In healthcare, the application of digital twins creates precise, virtual representations of individual patients, enabling advanced diagnostics, personalised treatment planning, and effective remote monitoring. Additionally, the integration of virtual and augmented reality therapies significantly advances mental health care, physical rehabilitation, and surgical training. These immersive technologies allow medical practitioners and patients to engage in realistic and controlled therapeutic scenarios, thereby improving treatment outcomes and patient care experiences.

For smart cities, the synergy between digital twins and the Internet of Things (IoT) optimises urban planning, infrastructure management, traffic flow, and citizen engagement. Initiatives such as the European CitiVerse highlight how virtual environments can effectively visualise, simulate, and enhance civic decision-making processes, fostering greater citizen participation, transparency, and efficient resource allocation.

The sector of smart manufacturing and robotics benefits considerably from digital twin technology and IoT integration. Detailed virtual replicas of production assets, manufacturing lines, and entire factories enable precise real-time monitoring, predictive maintenance, and operational optimisation. Furthermore, the combination of robotics with digital twins facilitates detailed performance analysis, enhances collaborative human-robot interactions, and allows for effective automation planning. Collectively, these technologies significantly improve operational efficiency, productivity, safety standards, and informed decision-making within industrial settings.

The entertainment and cultural sectors leverage virtual environments to revolutionise user engagement through virtual concerts, interactive museums, and digital festivals. Creative economies thrive by harnessing blockchain-enabled technologies such as NFTs, which empower artists and content creators to monetise and securely distribute their digital works across diverse virtual platforms, creating sustainable economic opportunities.

Finally, the domain of governance and public services experiences transformative potential through virtual participation platforms, extended reality-based e-government tools, and sophisticated policy simulation environments. These advancements promote transparency, accessibility, inclusivity, and responsiveness within public administration, significantly enhancing citizen-government interactions and fostering democratic engagement.

Additionally, the integration of large language models (LLMs) with extended reality demonstrates substantial promise for creating deeply personalised, inclusive, and engaging user experiences. Through advanced prompt engineering and model fine-tuning, these technologies enable highly customised interactions, further enriching education, training, and various interactive scenarios by combining immersive virtual environments with responsive, intelligent AI-driven interactions.

===Governance, Ethics, and Societal Integration Layer===

The Governance, Ethics, and Societal Integration Layer ensures that the technological advancements underpinning Web 4.0 and virtual worlds remain closely aligned with core societal values such as human rights, democratic principles, and environmental sustainability. This layer defines the essential socio-technical frameworks guiding the ethical and inclusive evolution of virtual ecosystems, addressing critical issues around digital rights, participation, governance, and environmental responsibility.

Central to this layer is the European Union's commitment to Value Alignment, prioritising digital rights protection, data sovereignty, child safety, and anti-discrimination measures. These ethical principles form a foundational pillar of Web 4.0, ensuring that technology serves diverse societal needs and promotes equitable participation. Accessibility and inclusion are emphasised, enabling diverse demographics to actively engage with virtual worlds, thus reducing digital divides and fostering broad societal integration.

To facilitate effective and representative governance, the adoption of multistakeholder models is critical. These governance structures integrate perspectives from governments, civil society, academia, industry, and individual users, thereby promoting collaborative policymaking and diverse representation. Approaches such as Citizens' Panels, participatory design workshops, and inclusive policy dialogues empower stakeholders from varied backgrounds to actively shape platform norms, standards, and regulatory frameworks. Such inclusive governance practices ensure that technological developments reflect collective societal values, addressing diverse needs and promoting democratic accountability.

The layer also addresses environmental sustainability as a core principle guiding technological advancement. Recognising the environmental implications of digital infrastructure, responsible innovation strategies integrate sustainability initiatives such as green computing practices, energy-efficient data centres, and the development of recyclable XR hardware. Lifecycle assessments and explicit commitments to carbon neutrality become essential components of platform policy, reflecting an ongoing responsibility to minimise ecological footprints and promote long-term sustainability.

Lastly, Citizen Co-Design initiatives represent a crucial mechanism for fostering user empowerment and civic engagement within virtual environments. Programmes such as the EU's Citizen Toolbox educate and actively involve citizens in platform governance and design processes. Through these participatory methods, users gain greater ownership, trust, and civic responsibility, enabling them to shape platforms that reflect their collective needs and aspirations. Moreover, as technology assessment evolves, active citizen participation and stakeholder involvement increasingly define the research, science funding, and innovation landscape, breaking disciplinary silos and fostering accountable, deliberative approaches to technology governance.

Collectively, these governance, ethics, and societal integration practices ensure that virtual worlds evolve as inclusive, ethical, and sustainable spaces, deeply rooted in democratic values, societal well-being, and environmental stewardship.

==Emerging Innovations==

Several forward-looking innovations are anticipated to significantly influence the evolution of Web 4.0 and virtual worlds, driving their continued advancement toward an integrated, inclusive, and immersive Metaverse.

*'''Neurosymbolic AI''' combines the capabilities of neural networks and symbolic reasoning. This hybrid approach equips AI systems with an enhanced ability to understand complex semantic structures and perform sophisticated reasoning tasks. It improves the explainability and contextual intelligence of AI-driven interactions, enabling richer, more transparent, and more intuitive experiences within virtual environments.

*'''RAG-based Digital Assistants''' (Retrieval-Augmented Generation) offer highly personalised AI assistance by integrating real-time knowledge graphs and extensive document corpora. Such assistants deliver contextually relevant, precise, and tailored responses, thereby enriching user experiences and fostering deeper engagement through meaningful virtual interactions.

*'''Federated Learning''' in virtual environments represents another transformative trend. By facilitating collaborative AI model training across decentralised data sources, Federated Learning protects user privacy, reduces reliance on centralised data repositories, and enhances the accuracy of AI-driven services. This decentralised training method enables the robust, privacy-respecting AI capabilities essential for scalable, secure, and personalised virtual worlds.

*'''Policy-as-Code''' translates governance rules and regulatory frameworks into executable software instructions. This innovation enables real-time monitoring, automated enforcement, and continuous compliance within virtual platforms. Policy-as-Code supports transparent, responsive, and accountable governance, simplifying regulatory adherence and enhancing user trust within increasingly complex digital ecosystems.

*'''Cross-Domain Interoperability Protocols''' are vital for enabling users to move seamlessly across various virtual worlds and metaverse platforms. These protocols facilitate the portability of digital identities, avatars, virtual goods, and other digital assets, empowering users to maintain their persistent virtual presence and possessions while navigating diverse immersive environments. Interoperability enhances user freedom, accessibility, and flexibility, paving the way for a unified and interconnected Metaverse.

*'''XR Accessibility Guidelines''' are essential for inclusive virtual environments. By establishing comprehensive standards and best practices for accessibility, these guidelines ensure virtual experiences are welcoming and usable for individuals with diverse physical, sensory, and cognitive capabilities. They cover visual, auditory, and motor accessibility, assistive technology integration, and alternative interaction methods, making immersive worlds accessible and equitable for all users.

*'''Cognitive Load Management''' techniques address user engagement and comfort by mitigating information overload and cognitive fatigue, which is particularly relevant within complex virtual environments. Effective cognitive load management enhances user productivity, sustained engagement, and overall user satisfaction by optimising the delivery and presentation of virtual information.
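The Policy-as-Code idea described above can be sketched as rules expressed as data plus a small evaluator that checks each platform event against them. Everything here is hypothetical and for illustration only: the rule names, the event fields, and the age threshold of 16 are assumptions, not requirements taken from any regulation or from a specific policy engine.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

Event = Dict[str, object]  # hypothetical platform event, e.g. {"action": "join_world", ...}


@dataclass(frozen=True)
class Rule:
    name: str                        # identifier reported when the rule is violated
    applies_to: str                  # the event action this rule governs
    check: Callable[[Event], bool]   # returns True when the event complies


# Illustrative rules only; the threshold and field names are assumptions.
RULES: List[Rule] = [
    Rule("minor-needs-guardian-consent", "join_world",
         lambda e: e.get("age", 0) >= 16 or e.get("guardian_consent") is True),
    Rule("trade-requires-verified-identity", "trade_asset",
         lambda e: e.get("identity_verified") is True),
]


def evaluate(event: Event, rules: List[Rule] = RULES) -> List[str]:
    """Return the names of all rules that the event violates."""
    return [rule.name for rule in rules
            if rule.applies_to == event.get("action") and not rule.check(event)]


violations = evaluate({"action": "join_world", "age": 14})
```

Keeping the rules in a data structure rather than scattered through application logic means they can be versioned, reviewed, and audited like any other code, which is the main attraction of the approach.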

Ultimately, the transition from isolated virtual environments to a fully integrated Metaverse hinges upon significant advancements across immersive realism, ubiquitous access and identity, interoperability, and scalability. Continuous innovations in 3D content creation, advanced rendering technologies, and spatial audio contribute directly to achieving high-fidelity and realistic virtual experiences. Enhanced Augmented Reality (AR) and Virtual Reality (VR) hardware, combined with ubiquitous high-bandwidth connectivity, enable users to effortlessly access and interact with immersive environments from diverse physical locations. Robust interoperability standards and scalable solutions, such as edge computing and distributed architectures, are critical for accommodating increasing user numbers and complexity of interactions. Together, these innovations support the evolution of a Metaverse that is scalable, open, interconnected, and human-centric, laying the foundation for future digital interaction and collaboration.

==Summary==

This unified framework establishes a robust, scalable, and ethically grounded foundation for the development and expansion of virtual worlds within the era of Web 4.0. By integrating technological sophistication with social and political awareness, the framework provides an adaptable blueprint for researchers, developers, policymakers, and stakeholders. It positions Europe, along with like-minded global ecosystems, at the forefront of creating open, inclusive, and human-centred virtual environments. Ultimately, the continued advancement across immersive realism, ubiquitous access, interoperability, and scalability will accelerate responsible innovation, profoundly shaping the future of digital interactions, collaboration, and societal integration within the emerging Metaverse.

[[File:TechFrameworkPicture2.png|thumb|'''Figure 2''': Technological Framework for Virtual Worlds and Web 4.0. This multilayered framework outlines the foundational components for next-generation virtual environments. It encompasses enabling technologies such as artificial intelligence, blockchain, and spatial computing, as well as supporting infrastructure including cloud, edge, and 5G networks. The framework spans application domains ranging from virtual worlds to digital twins, all underpinned by governance principles aligned with European Union values of privacy, security, and ethics.]]

==XR Interfaces and User Interaction==

This section examines how users access, interact with, and engage with virtual worlds. It covers the hardware and software that connect human senses, inputs, and actions to the digital environment, including XR devices and various input/output modalities. While Core Technologies define the fundamental types of experiences that are technologically possible, Interfaces determine how the user experiences and controls these virtual environments. Effective interface design is crucial for ensuring natural, intuitive, and immersive interactions within virtual worlds, and the choice and implementation of interface technologies greatly affects the usability, accessibility, and sense of presence felt by users navigating these digital spaces. The success of virtual reality applications depends heavily on interfacing users with the virtual environment in a way that supports the desired activities, so VR interfaces should take human factors into account. Behavioural Software Assistance helps users employ VR interfaces efficiently, with techniques such as restricting object movement to aid motor skills.

Virtual interfaces are essential for facilitating user interaction and immersion within virtual worlds. These interfaces encompass various technologies that enable users to engage with and manipulate the virtual environment, including haptic devices, gesture recognition systems, and brain-computer interfaces. The design of virtual interfaces plays a critical role in determining the intuitiveness, accessibility, and overall user experience of virtual world applications, as naturalistic interactions contribute to a heightened sense of presence and engagement. Therefore, the development of innovative virtual interfaces is vital for unlocking the full potential of virtual worlds and enabling new forms of human-computer interaction. User interaction within virtual environments should be intuitive, seamless, and responsive to user actions, leveraging multimodal feedback mechanisms to enhance the sense of presence and immersion.

===Core Interface Technologies and Examples===

XR devices constitute the primary means by which users visualise and interact with virtual worlds, offering varying degrees of immersion and presence. Head-mounted Virtual Reality displays provide immersive visual experiences by projecting stereoscopic images directly in front of the user's eyes, while Augmented Reality and Mixed Reality devices overlay digital content onto the real world, blending virtual and physical elements. These displays are accompanied by controllers, which enable users to manipulate objects, navigate environments, and interact with other users within virtual worlds.

The core XR hardware devices include:

*'''VR and MR Headsets:''' Valve Index and Sony PlayStation VR2 (VR); Meta Quest 3 and Apple Vision Pro (MR). These offer stereoscopic 3D visuals and spatial audio, often with inside-out tracking. VR headsets fully immerse the user's vision in the virtual environment, while MR headsets use passthrough cameras to overlay virtual objects on a view of the real world.

*'''AR Glasses:''' Microsoft HoloLens 2, Magic Leap, XREAL, and upcoming consumer AR glasses. Their see-through displays overlay holograms onto the user's view of the real world, and they can map the environment to anchor virtual objects on real surfaces.

*'''Mobile Devices:''' Smartphones and tablets, while less immersive than wearables, serve as common AR interface devices via their touchscreens and cameras, providing an entry point to AR for many users.

*'''CAVE systems and 3D Displays:''' In some cases, VR is experienced through room-sized setups or holographic displays, though these are less common and primarily used in industrial or research settings.

To participate in virtual worlds, users require input and control devices beyond just visual and auditory displays. These include:

*'''Motion Controllers:''' Handheld controllers with buttons, triggers, and joysticks allow users to point at, grab, and manipulate virtual objects, often with six-degrees-of-freedom (6DoF) motion tracking.

*'''Hand Tracking and Gestures:''' Newer systems use cameras to track users' hands directly, enabling gesture-based interfaces without the need for controllers, allowing more natural and intuitive interactions.

*'''Eye Tracking:''' Many advanced headsets (for example, the Meta Quest Pro) have inward-facing sensors that track the user's gaze, which can be used as an input and for techniques such as foveated rendering, where full detail is rendered only where the user is looking.

*'''Voice Commands as Natural Language Input:''' Speech recognition allows users to issue commands, dictate text, or have conversations with virtual agents.
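The foveated rendering technique mentioned under Eye Tracking can be sketched as a function that lowers rendering resolution as the angle between the gaze direction and a pixel's direction grows. This is a simplified illustration: the function names, the three-tier scheme, and the 5 and 15 degree thresholds are hypothetical choices, not values taken from any particular headset or engine.

```python
import math
from typing import Tuple

Vec3 = Tuple[float, float, float]


def angle_between_deg(a: Vec3, b: Vec3) -> float:
    """Angle in degrees between two non-zero 3D direction vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    cos_theta = max(-1.0, min(1.0, dot / norm))  # clamp against rounding error
    return math.degrees(math.acos(cos_theta))


def shading_scale(gaze_dir: Vec3, pixel_dir: Vec3,
                  fovea_deg: float = 5.0, mid_deg: float = 15.0) -> float:
    """Return the fraction of full resolution to render for this direction.

    The thresholds are illustrative assumptions: full detail near the gaze
    point, half detail in a middle ring, quarter detail in the periphery.
    """
    eccentricity = angle_between_deg(gaze_dir, pixel_dir)
    if eccentricity <= fovea_deg:
        return 1.0
    if eccentricity <= mid_deg:
        return 0.5
    return 0.25


# The user looks straight ahead along -Z; sample a direction 10 degrees to the right.
gaze = (0.0, 0.0, -1.0)
ten_deg_right = (math.sin(math.radians(10)), 0.0, -math.cos(math.radians(10)))
scale = shading_scale(gaze, ten_deg_right)
```

Because visual acuity falls off away from the gaze point, reducing detail in the periphery can cut rendering cost with little perceived loss, which is why eye tracking and rendering are often designed together.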

Beyond visual and auditory stimuli, the user experience can be further enhanced by incorporating other sensory modalities. Haptic feedback systems, such as gloves, vests, and other devices, provide tactile sensations, force feedback, and vibrotactile feedback to simulate touch and physical interactions in virtual worlds.

Haptic technology allows users to feel virtual objects and textures, enhancing the sense of presence and realism. This tactile feedback greatly improves immersion, going beyond just visual and auditory outputs. Haptic controllers and gloves offer simple vibration-based feedback, while more advanced systems apply force or vibration to simulate the shape and texture of virtual objects. Haptic suits and wearables provide feedback across the body, enabling users to feel virtual sensations like rain or the impact of an object. Experimental interfaces, such as smell devices and thermal feedback, illustrate the push towards multi-sensory immersion.

Additionally, advanced interface technologies, like brain-computer interfaces (BCI), are emerging. BCI uses neural signals as input, allowing users to potentially trigger actions in the virtual world simply by thinking about them. Telepresence robotics also blurs the line between virtual and physical, enabling users in VR to control remote physical robots and feel as if they are present in another location.

The table below provides examples of various XR interface technologies and their purposes in virtual worlds.

{| class="wikitable"
|+ Examples of XR Interface Technologies
|-
! Interface Type !! Examples !! Purpose in Virtual Worlds
|-
| VR and MR Headset (HMD) || Valve Index, PS VR2, Meta Quest 3, Apple Vision Pro || Immersive visual & audio display for full virtual environments (blocking out reality). Users "enter" the virtual world via the headset. MR headsets offer AR capabilities thanks to passthrough cameras.
|-
| AR Glasses || Microsoft HoloLens 2, Magic Leap, XREAL || Transparent or pass-through display to overlay holograms on real surroundings. Enables seeing virtual objects in real space (industrial guides, gaming, navigation).
|-
| Motion Controllers || Oculus Touch controllers, HTC Vive wands || Handheld devices with tracking and buttons that allow users to point, grab, and interact with virtual objects with precise motion mapping.
|-
| Hand & Eye Tracking || Meta Quest Pro Eye Tracking, Ultraleap Leap Motion Controller, Tobii eye tracker (built into Varjo headsets) || Camera-based tracking of hands and eyes enabling gesture control and gaze interaction. This removes the need for physical controllers and makes interaction more natural (pointing with a finger, selecting by looking).
|-
| Haptic Feedback || bHaptics TactGlove (gloves), Teslasuit (full body suit), Controller rumble motors || Provides tactile sensations (vibrations, pressure, texture simulation) to the user. Increases immersion by letting users "feel" virtual events – e.g. a rumble when firing a weapon or a touch sensation when a virtual character taps your shoulder.
|-
| Voice Interface || Voice commands (built-in mic on headsets), AI assistants like Alexa/Siri integration in VR || Allows spoken interaction with the virtual world or virtual agents. Useful for issuing commands (especially in AR when hands may be busy) or conversing with NPCs. Modern AI can enable dynamic conversations with virtual characters.
|-
| Brain-Computer Interface || EEG headbands (e.g. NextMind, OpenBCI, ArcTop), Neurable headphones || Experimental tech where brain signals (or other neural indicators) control aspects of the experience. For instance, focusing intently could be mapped to telekinetically moving an object in VR. Still rudimentary but a potential future interface for hands-free control.
|}

===Standards for Immersive Interfaces===

Ensuring seamless cross-platform compatibility for XR devices and methods requires the adoption of crucial standards. One key standard is OpenXR, an open, royalty-free API standard managed by the Khronos Group. OpenXR provides a universal interface for VR and AR hardware, enabling developers to write code once and deploy it across different headsets without the need for platform-specific modifications. As described, "OpenXR is an open, royalty-free standard for access to virtual reality and augmented reality platforms and devices." This standard promotes interoperability, allowing applications to be deployed across a wide range of VR and AR platforms, and it helps streamline development while ensuring a consistent user experience across various XR devices and systems.

Another important standard is WebXR, which brings VR and AR experiences to the web. WebXR enables developers to create immersive web-based applications that can run in a browser without requiring additional plugins or software installations. It provides a set of JavaScript APIs that allow web developers to access VR and AR hardware features, such as head tracking, motion tracking, and display output, directly from a web page. This makes it easier to deploy XR experiences to a broader audience, as users can access them simply by visiting a website in a WebXR-compatible browser.

XR interaction poses unique challenges. Standardisation efforts by 3GPP are underway to address Quality of Experience requirements for XR over 5G and beyond, focusing on aspects like low latency and high reliability. Other standards, like those being developed around spatial audio and haptics, also help ensure a consistent and high-quality experience regardless of the hardware or software used. Standard file formats, like glTF for 3D models, are also essential for easily transferring assets between different VR/AR tools.

The convergence of virtual reality, augmented reality, and mixed reality technologies, collectively known as extended reality, has unlocked novel avenues for interaction, collaboration, and immersion in virtual worlds. XR interfaces go beyond conventional screens and keyboards to deliver experiences that engage multiple senses, offering new ways to interact with computers and digital content. The development of auditable XR systems is critical, especially as these technologies become more integrated into sensitive sectors. This requires careful consideration of both hardware and software components, as well as a thorough understanding of user experience and security implications.

===Relationships and dependencies===

The Interfaces domain is closely intertwined with Core Technologies and Tools & Development. For instance, when developing a VR application in Unity, a programmer utilises the OpenXR API or platform-specific SDKs to support a given headset. Furthermore, Interfaces have implications for Data & AI – for example, eye tracking data represents a form of user data that AI might analyse to enable adaptive interfaces, and voice interfaces rely on AI for speech recognition and natural language processing. From the Participation perspective, accessible interfaces determine the level of user engagement; for instance, more affordable mobile-based VR or AR solutions allow a broader range of consumers and prosumers to access virtual worlds, whereas expensive or complex interfaces may limit participation to technology enthusiasts. Consequently, the democratisation of interface technologies is crucial for the widespread adoption of Web 4.0. The evolution of interfaces in XR is not merely about technological advancement but also involves understanding and incorporating human factors, ethical considerations, and standardisation to ensure a seamless, secure, and accessible experience for all users. This convergence of elements ensures that as we advance toward Web 4.0, the interfaces that bridge our physical and digital realities are as intuitive and inclusive as possible. | |||

===Emerging Trends in Interfaces===

We are observing a trend toward convergence of interface devices. Lighter form factors, such as AR glasses with a more natural appearance, are a significant area of development. Haptic interfaces are advancing to provide force feedback and richer sensory experiences, while brain-computer interfaces may become more feasible for niche applications within the next 5-10 years. Furthermore, interfaces are anticipated to become increasingly pervasive, exemplified by AR-enabled car windshields or smart home devices projecting virtual interfaces into the user's environment. The goal is to make the interface technology seamless, allowing users to experience it as a natural extension of their own senses and body within the virtual realm.

The development of novel interfaces for virtual worlds, including virtual reality and augmented reality, is crucial for creating immersive and intuitive user experiences. One notable development is the rise of natural user interfaces that use touch, vision, and voice to interact with devices, changing how we engage with computing and communication technologies. Interfaces that use speech as a primary interaction mode are becoming increasingly prevalent, which necessitates advanced features such as dialogue planning and execution to support effective human-computer dialogue. The continuous evolution of human-computer interaction has also led to the use of virtual reality for training, offering a cost-effective means of skills development across various applications. Recent advancements in display technologies and artificial intelligence have enabled interface navigation that adapts to the user's behaviour. User-centred design remains a key factor in human-computer interaction. Together, this technological and methodological progress is redefining the requirements for effective and desirable human-computer interaction.

==Tools and Development (Engines, Platforms and Standards)==

===Role of Tools & Development in Virtual Worlds===

The Tools & Development domain encompasses the software tools, engines, and development platforms used to construct virtual world content, as well as the standards and protocols that ensure different systems and content can interoperate effectively. If we consider a virtual world as a complex system, these tools and blueprints are what creators require to build that system. This domain is the driving force behind the entire ecosystem: without robust tools and agreed-upon standards, it would be tremendously challenging to produce the rich, interoperable experiences envisioned for Web 4.0. Standards and protocols ensure that the various components of Web 4.0 can communicate and work together effectively. For instance, standards like glTF for 3D models or protocols like WebRTC for real-time communication are essential for creating interoperable experiences. | |||

===Interoperability and Standards===

Interoperability and standardisation are pivotal to the success of virtual environments. The absence of these foundational elements would lead to segregated and incompatible virtual environments, thereby impeding the fluid interchange of users, data, and assets across diverse platforms. This underscores the critical importance of establishing uniform standards and protocols to foster a cohesive and interconnected metaverse. In the context of virtual reality, interoperability facilitates the seamless integration of diverse virtual reality applications, enabling users to effortlessly transition between varied virtual environments without encountering compatibility hurdles or the necessity for distinct hardware or software configurations. By adhering to standardised protocols, developers can ensure that their applications are accessible across a wide array of virtual reality platforms, thus expanding their potential user base and streamlining the process of content dissemination. Moreover, standardisation encourages innovation and collaboration within the virtual reality sector.

Game engines have become the foundational tools for constructing interactive 3D virtual environments, and they are equally essential for the metaverse. These engines offer a comprehensive suite of capabilities, encompassing content creation, physics simulation, networking, and rendering functionalities. Two prominent game engines in this domain are Unity and Unreal Engine, both of which are powerful platforms for developing virtual and augmented reality experiences. Unity has gained widespread popularity in the AR/VR space due to its user-friendly interface and sizable community, which facilitates accessibility for novice developers and enables cross-platform deployment. Conversely, Unreal Engine is renowned for its superior graphical fidelity and is often the preferred choice when high-quality visuals or complex simulations are required. Importantly, both engines now offer extensive support for XR platforms.

Other important engines and frameworks include:

*'''Godot Engine:''' An open-source game engine that is seeing increasing adoption and offers support for extended reality functionalities. It is a lightweight and cost-free option without any royalties, which makes it an appealing choice for independent developers.
*'''NVIDIA Omniverse:''' A robust platform designed for 3D design collaboration and the construction of metaverse applications. It provides a real-time 3D graphics rendering engine and supports various industry-standard formats, thereby facilitating seamless integration with other design tools. This platform is particularly well-suited for projects that entail intricate 3D models or necessitate real-time collaboration among distributed teams.
*'''Web Engines/Frameworks:''' For web-based virtual environments, software frameworks such as Three.js, Babylon.js, and A-Frame facilitate the development of 3D scenes that can be rendered within web browsers. Platforms like PlayCanvas also focus on enabling the web-based delivery of virtual content.
*'''Platform-Specific SDKs:''' Platforms like Roblox or Second Life have their own creation tools (e.g. Roblox Studio) that enable users to build within those ecosystems. Similarly, modding tools for existing games (like Bethesda's Creation Kit for Skyrim) can be considered part of how virtual content is made, though they are platform-specific.

3D modeling and content creation tools are used to create the assets (3D models, textures, animations, sounds) that populate virtual worlds:

*'''Blender:''' A popular open-source 3D modeling and animation suite. Prosumers and professionals alike use Blender to create everything from characters to environments. It supports exporting to standard formats (glTF, FBX, etc.) which can then be imported into game engines.
*'''Autodesk Maya / 3ds Max:''' Industry-standard tools for high-quality modeling, animation, and rendering. Used heavily in game development and film, and also for metaverse content creation. They integrate with engines via plugins (e.g. Unreal has a Datasmith exporter for 3ds Max).
*'''Adobe Creative Suite:''' Tools like Photoshop (for textures/UI), Illustrator (for vector graphics), and Substance Painter (for advanced texturing/PBR materials) are widely used. Adobe is also involved in 3D with tools like Adobe Medium (VR sculpting) or Adobe Aero (simple AR creation).
*'''Quill / Tilt Brush / VR Creation Tools:''' These tools allow creating 3D art from within VR – e.g. Tilt Brush lets you paint in 3D space, and Quill (by Smoothstep, originally by Oculus) lets you animate in VR. They represent new paradigms of content creation suited to the medium.
*'''World Building Platforms:''' Some specialised tools focus on assembling full virtual worlds without heavy coding. For example, Epic's Fortnite Creative or Core allow users to build game worlds with intuitive editors. There are also scene editors in platforms like Somnium Space and VRChat (with Udon scripting), empowering prosumers to develop content.

Collaboration and deployment tools include:

*'''Version Control & Collaboration:''' Just like traditional software, large virtual world projects use Git or Perforce to manage changes to content and code by teams of creators. Newer tools like Unity Collab or Unreal Multi-User Editing are tailored for 3D content collaboration.
*'''Cloud Deployment:''' Tools to deploy and host servers for virtual worlds (networking frameworks such as Mirror, and multiplayer server orchestration such as Improbable's SpatialOS or Unity's Multiplay) are crucial to get experiences online and scalable. Containerisation (Docker/Kubernetes) is also used for scaling instances of worlds.
*'''DevOps for Metaverse:''' The concept of continuous deployment and update is extending to virtual worlds – e.g. pushing content updates or live events. Platforms might have "live ops" tools to schedule events, A/B test features in-world, etc.

===Key Standards and Protocols===

3D Content Standards:

*'''glTF (GL Transmission Format):''' An open standard from Khronos for 3D models and scenes optimised for runtime delivery. glTF is often called the "JPEG of 3D" because it is a compact, efficient format for transmitting 3D assets. It supports meshes, materials, animations, etc., in a JSON (or binary .glb) structure. It is widely supported: engines like Godot prefer glTF, and Adobe, Blender, and others have built-in glTF export. Using glTF means a model created in one tool can easily be imported into any compliant engine or platform, which is essential for interoperability in Web 4.0.
*'''USD (Universal Scene Description):''' Originally by Pixar, now open source, USD is a powerful 3D scene format that can describe very complex scenes with layers and variations. It is heavier than glTF and aimed at content creation pipelines (think of USD as analogous to Photoshop's PSD, whereas glTF is like JPEG). USD is becoming a standard for interchange between content creation tools and high-end platforms (e.g. NVIDIA's Omniverse uses USD as its lingua franca). In the metaverse context, USD could allow different tools to collaborate on one large scene (one person working in Maya, another in Blender, merging into a USD world). glTF would then be used to publish a performant version of that world for end-users.
*'''Other formats:''' There are older standards like OBJ or FBX (proprietary from Autodesk) which are widely used in 3D, but glTF is rapidly taking over as the modern standard for open exchange. VRM is a newer format specifically for humanoid avatars (built on glTF with extensions for things like bone animations and facial expressions), popular in the anime VTuber community.
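
To make the JSON structure of glTF described above more concrete, the following Python sketch assembles a minimal glTF 2.0 document containing a single triangle. It is an illustrative example only: the function name and the triangle coordinates are hypothetical, and real assets would normally be exported from a tool such as Blender instead of being written by hand. The vertex data is embedded as a base64 data URI so that the document is self-contained.

```python
import base64
import json
import struct


def minimal_gltf_triangle():
    """Build a minimal glTF 2.0 document (as a dict) describing one triangle."""
    positions = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
    # Pack the vertices as little-endian 32-bit floats, as glTF buffers require.
    blob = b"".join(struct.pack("<3f", *p) for p in positions)
    uri = "data:application/octet-stream;base64," + base64.b64encode(blob).decode("ascii")
    return {
        "asset": {"version": "2.0"},
        "scene": 0,
        "scenes": [{"nodes": [0]}],
        "nodes": [{"mesh": 0}],
        "meshes": [{"primitives": [{"attributes": {"POSITION": 0}}]}],
        "accessors": [{
            "bufferView": 0,
            "componentType": 5126,  # FLOAT
            "count": len(positions),
            "type": "VEC3",
            # POSITION accessors must declare per-component bounds.
            "min": [min(p[i] for p in positions) for i in range(3)],
            "max": [max(p[i] for p in positions) for i in range(3)],
        }],
        "bufferViews": [{
            "buffer": 0,
            "byteOffset": 0,
            "byteLength": len(blob),
            "target": 34962,  # ARRAY_BUFFER (vertex data)
        }],
        "buffers": [{"uri": uri, "byteLength": len(blob)}],
    }


gltf_text = json.dumps(minimal_gltf_triangle(), indent=2)
```

Saving `gltf_text` to a file with a `.gltf` extension should yield an asset that glTF-compliant viewers and engines can load, which illustrates why a plain JSON description plus a binary buffer makes assets easy to move between tools.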

XR Device and Experience Standards:

*'''OpenXR:''' Discussed earlier in Interfaces, OpenXR ensures a single code path to support multiple XR hardware. It is critical for reducing fragmentation in development; rather than writing separate code for Oculus vs. SteamVR vs. Windows Mixed Reality, a developer can target OpenXR and cover all. This standard is supported by almost all major players now (Valve's SteamVR, Meta's Quest, Microsoft's MR, etc. all have OpenXR support).
*'''WebXR:''' The W3C standard API for accessing VR/AR in web browsers. It allows web developers to create immersive web pages that can, for instance, enter VR mode if a headset is connected, or show an AR view through a phone camera in-browser. As Web 4.0 pushes for openness, WebXR is an important piece to allow metaverse content via just a URL, with no app install needed. On PC, Chromium-based browsers such as Chrome and Edge use OpenXR as the backend for WebXR, exemplifying how web standards and native standards connect.

Networking and Interchange Standards:

*'''WebRTC:''' As mentioned, it is the main standard for peer-to-peer real-time communications, used in web and native apps for voice, video, and data channels. It is an IETF/W3C standard and very relevant for any social aspect of virtual worlds (for example, browser-based social platforms commonly use WebRTC for voice chat). It enables low-latency, direct or server-relayed communications without needing proprietary protocols.
*'''Network Protocols:''' While not standards in the same sense, many engines use common libraries or patterns (like ENet, or UDP with snapshot interpolation techniques) which are documented best practices. There is also WebSockets as a web standard for persistent connections, used by web-based virtual worlds to maintain state sync with a server.
*'''File and Asset Standards:''' Apart from 3D models, standards like PNG/JPEG for images and OGG/MP3 for audio carry over into virtual worlds. One might also consider HTML/CSS, as they could be used to design in-world user interfaces or dashboards (some engines allow embedding HTML surfaces in the 3D world).
*'''Avatar and Identity Standards:''' This is an emerging area, with projects like VRM (for avatars) and proposed standards for defining an avatar's appearance, attachments (like clothes as separate items), and animations that could be portable. The VRM consortium, for example, extended glTF to better handle anime-style avatars and their spring bone physics. Decentralised Identifiers (DIDs), a W3C standard, can also be seen as part of identity data standards.
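
The snapshot interpolation pattern mentioned under Network Protocols above can be sketched in a few lines. In this pattern, a client buffers timestamped state updates received from the server and renders remote objects slightly in the past, blending between the two snapshots that bracket the render time. The Python example below is a minimal sketch under stated assumptions: the snapshot layout (a timestamp in milliseconds plus a 3D position) and the function name are hypothetical and do not correspond to any specific engine's API.

```python
from bisect import bisect_right


def interpolate_position(snapshots, render_time_ms):
    """Linearly interpolate a position from buffered server snapshots.

    snapshots: list of (timestamp_ms, (x, y, z)) tuples sorted by timestamp.
    render_time_ms: the (slightly delayed) time at which the client renders.
    """
    if not snapshots:
        raise ValueError("no snapshots received yet")
    times = [t for t, _ in snapshots]
    i = bisect_right(times, render_time_ms)
    if i == 0:
        return snapshots[0][1]    # render time precedes all data: hold the oldest state
    if i == len(snapshots):
        return snapshots[-1][1]   # no newer snapshot yet: hold the latest state
    (t0, p0), (t1, p1) = snapshots[i - 1], snapshots[i]
    alpha = (render_time_ms - t0) / (t1 - t0)  # 0 at the older snapshot, approaching 1 at the newer
    return tuple(a + (b - a) * alpha for a, b in zip(p0, p1))


# Example: three snapshots received 100 ms apart.
buffered = [
    (0, (0.0, 0.0, 0.0)),
    (100, (1.0, 0.0, 0.0)),
    (200, (2.0, 2.0, 0.0)),
]
midpoint = interpolate_position(buffered, 150)
```

Rendering in the past trades a small, constant delay for smooth motion even when packets arrive unevenly. Production systems typically add extrapolation and handling for lost or late packets, which this sketch omits.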

Metaverse Content and Interaction Standards: Organisations and consortiums are working on broader standards:

*'''Metaverse Standards Forum:''' Launched in 2022, this is a consortium of tech companies and standards organisations (like Khronos, W3C, Open AR Cloud, etc.) aiming to coordinate and advance interoperability standards for the metaverse. While it does not create standards itself, it helps harmonise efforts like glTF, USD, and OpenXR, and identify gaps where new standards might be needed (such as a possible standard for metaverse scene description or economy interoperability).
*'''X3D:''' An ISO standard, successor to VRML, for describing 3D scenes (including behaviours via scripts). It has not seen widespread use in modern engines (being more of an academic/web standard), but it is an example of prior attempts to standardise virtual world formats.
*'''Other standards bodies:''' Bodies such as ISO and IEEE are likely to introduce guidelines (e.g. IEEE might release an XR ethics standard or an AR safety standard) which, while not technical standards, influence development practices.

{| class="wikitable"
|+ Tools & Standards Summary
|-
! Tool/Standard !! Description & Role in Web 4.0
|-
| Unity 3D Engine || Widely used game engine for developing VR/AR experiences. Known for its cross-platform support (deploy to mobile, VR, consoles, web) and a gentle learning curve, making it popular for a broad developer base. Many metaverse apps and games are built on Unity.
|-
| Unreal Engine 5 || High-end game engine known for cutting-edge graphics. Used when visual realism or large-scale worlds are needed. Supports XR (with strong OpenXR integration) and is favoured for complex, high-fidelity virtual world projects.
|-
| Blender || Open-source 3D creation tool. Used to model and animate characters, objects, and environments that can then be imported into engines via standard formats. Empowers prosumers by being free and community driven.
|-
| ARKit / ARCore || SDKs by Apple and Google for mobile AR development. They provide tools for plane detection, tracking, and rendering AR on iOS (ARKit) and Android (ARCore), enabling millions of smartphone users to experience AR content. Often used in Unity/Unreal via plugins.
|-
| OpenXR (Khronos) || Open standard API for VR/AR device interfaces. Allows one application codebase to support many kinds of XR hardware (headsets, controllers). Reduces fragmentation and is widely adopted (supported by major XR platforms).
|-
| glTF (3D format) || Open 3D asset exchange format, "the JPEG of 3D", optimised for delivering 3D models in applications. Enables efficient transfer of models and scenes between tools and platforms in the metaverse.
|-
| WebXR (Web API) || Web standard that lets web apps access VR/AR capabilities. With WebXR, a user can click a link and enter a VR experience in the browser, which is crucial for easy access to virtual worlds without app installs. Uses OpenXR on compatible browsers to interface with devices.
|-
| WebRTC (Protocol) || Real-time communication protocol (peer-to-peer) standardised for the web but used beyond. In metaverse contexts, handles voice chat, video streaming (e.g. for virtual conferencing), or syncing interactive data streams among participants. Essential for live social interaction quality.
|-
| USD (3D scene format) || Pixar's Universal Scene Description, used for composing complex 3D scenes with multiple assets. In metaverse development, used behind the scenes in content creation pipelines and high-fidelity simulations (e.g. NVIDIA Omniverse uses USD for multi-tool collaboration). Works alongside glTF (USD for creation, glTF for delivery).
|-
| Decentraland SDK / Roblox Studio || Examples of platform-specific dev tools enabling user-generated content. They include templates and languages (Roblox uses Lua) for scripting world logic. These tools foster prosumer participation by lowering the barrier to create interactive experiences on those platforms.
|}

====Emerging Trends in Tools & Development====

While we are witnessing a growing emphasis on no-code or low-code creation tools in the metaverse, enabling non-programmers to design immersive experiences, there are concerns that such tools may limit the depth and complexity of the content that can be created. Additionally, while collaborative world-building where multiple individuals in virtual reality can collectively construct virtual environments in real-time is an emerging trend, it may also introduce challenges in maintaining consistency and coherence across the virtual landscape. | |||

From a standards perspective, the focus on avatar interoperability, inventory portability, and the continued evolution of 3D data formats is understandable, but there are concerns that these efforts may result in fragmentation and a lack of true cross-platform compatibility. The migration of engines towards cloud-based architectures, following the "cloud gaming" model, may also raise issues of accessibility and data privacy, as users may be required to rely on remote servers and face potential latency or connectivity issues. | |||

Finally, while the influence of open-source movements may shape the tool landscape and offer alternatives to proprietary engines, there are concerns that the metaverse may still be dominated by a few vendor-specific toolchains, as major technology companies may seek to maintain control over the ecosystem. | |||

==GOVERNANCE & COMPLIANCE (IPR, ETHICS, & POLICIES)==

====Role in Virtual Worlds====

Compliance and governance in virtual worlds can be overly restrictive, stifling innovation and creativity. While legal, ethical, and regulatory frameworks are important, they should not be implemented in a heavy-handed manner that hinders the development of immersive digital experiences. The management of Intellectual Property Rights, for instance, can become a complex web of regulations that make it difficult for creators to freely build upon existing works. Additionally, the enforcement of data protection laws, while necessary, can lead to excessive privacy concerns that limit the collection of valuable user data needed to enhance virtual experiences. Safety regulations, if not carefully balanced, can restrict the sense of freedom and exploration that draws users to virtual worlds. Ultimately, the fundamental question of "what are the rules governing the virtual world, and how can they be effectively enforced?" must be answered with a light touch, allowing for the organic growth and evolution of these digital spaces. Compliance should serve as a tool to foster trust, not a barrier that stifles the innovative potential of Web 4.0. | |||

Key Components:

*'''Intellectual Property Rights (IPR):''' In virtual worlds, intellectual creations such as 3D models, music, designs, and even avatar appearances may be protected by copyright or trademark laws. The management of Intellectual Property Rights in the metaverse is a complex matter:
**'''Ownership of User-Generated Content:''' When a creator (or "prosumer") generates a virtual item or environment, the question of ownership arises. Often, platforms' terms of service grant them certain licences over the user-generated content. A prominent trend in Web 4.0 is to provide creators with clearer ownership rights, potentially leveraging Non-Fungible Tokens (NFTs) as proof of ownership for unique digital items. Ensuring that creators can effectively enforce their rights remains a challenge. Tools may incorporate watermarks or utilise blockchain technology to track the provenance of virtual assets. Novel IPR frameworks may emerge, where a virtual asset is represented as an NFT that denotes the creator, and smart contracts manage resale royalties to the original creator.
**'''Copyright and Trademark Enforcement:''' Similar to the web, virtual worlds will grapple with cases of infringement, such as users importing famous brands' logos or 3D models derived from copyrighted sources. Platforms will need to implement compliance processes, akin to DMCA-style takedown mechanisms, to remove infringing content. Regulators, including those in the European Union, are already considering how existing laws apply in these virtual environments. For instance, if copyright piracy occurs within a virtual world, the existing copyright law should cover it, but the enforcement mechanisms require further clarification.
**'''Licensing of Virtual Goods:''' There is also a need for standardised licences that enable creators to allow others to use their work under specific conditions. IPR compliance in virtual worlds also entails respecting the terms of open-source or licensed content that may be used within these digital environments.

*'''Data Protection and Privacy Compliance:''' Compliance with data protection regulations, such as the EU's GDPR or California's CCPA, is essential for metaverse platforms that collect personal data. However, virtual worlds often gather even more sensitive information, including biometric data from VR headsets, such as iris scans, pupil dilation, heart rate, and voice analysis. Effective compliance in this context requires:
**Obtaining informed consent from users for data collection.
**Enabling users to access and delete their data.
**Implementing robust data security measures to prevent breaches, which could have significant consequences given the sensitive nature of the collected information.
**Not using data in exploitative ways. For example, if a platform can measure emotional state via biometrics, ethical guidelines might be needed to forbid using that to manipulate the user (a potential dark pattern).

*'''Age and Child Protection:''' When children participate in virtual worlds, compliance with regulations such as the Children's Online Privacy Protection Act (COPPA) in the United States is essential. This entails obtaining parental consent, limiting the collection of personal data, and implementing other safeguards for underage users. The design of virtual experiences may also need to adhere to age-rating systems or incorporate protective measures tailored to younger audience segments.
*'''Safety Regulations:''' Virtual worlds must address potential risks to users' physical and mental well-being, with safety regulations encompassing measures to prevent harassment, hate speech, and other forms of harmful behaviour.

• Content and Behaviour Moderation Policies: Ethically and often legally, platforms are required to moderate harmful content. The European Union's Digital Services Act mandates that platforms manage illegal content and be transparent about their content moderation practices. This regulation is likely to encompass metaverse platforms as well. Content moderation can be achieved through automated systems that detect inappropriate content. The goal is not only to identify policy violations but also to evaluate content within a specific context to determine whether the rules have been broken. | |||

• Ethical AI Use: When utilising AI in virtual worlds, there are important ethical considerations to address: | |||

o Mitigating bias in the AI systems employed | |||

o Ensuring transparency about whether users are interacting with AI-powered avatars or human-controlled ones, to set appropriate expectations | |||

o Implementing responsible practices for AI-generated content, preventing the creation of inappropriate or copyrighted material | |||

o Adhering to emerging regulatory frameworks, such as the EU's AI Act, which may impose requirements on "high-risk AI systems" used in metaverse applications, including documentation of training data and human oversight. | |||

• Research Ethics: Research conducted in virtual worlds introduces ethical considerations, especially for studies involving human subjects. Researchers should seek guidance from their Institutional Review Boards to navigate challenges regarding informed consent, privacy, and the potential for psychological harm to participants. | |||

• User Rights and Governance | |||

o Legal scholars are examining the legal status of avatars, the jurisdictional authority of law enforcement in virtual spaces, and the mechanisms for resolving disputes over virtual interactions. There is ongoing debate about whether attacks on avatars should be legally treated as assaults, alongside proposals to establish "avatar rights" or to apply real-world laws to virtual actions, since these interactions are often taken seriously. Others argue that virtual interactions should be treated differently from physical-world actions and that new legal frameworks may be needed to address the unique nature of virtual worlds. | |||

o Virtual worlds may incorporate arbitration services or integrate with traditional legal systems to address serious disputes stemming from digital interactions. Compliance also entails adhering to relevant local laws, such as gambling or financial regulations, with virtual world providers potentially geo-fencing certain content or features to align with different jurisdictions' requirements. | |||

o Conversely, some virtual environments may leverage blockchain and decentralised autonomous organisations (DAOs) to enable user-driven governance. Such models may be used for community-based rule-making and moderation, which could provide more flexibility and user control than traditional legal systems. While this decentralised approach can be empowering, it still ultimately intersects with real-world legal frameworks. | |||
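The geo-fencing of content or features mentioned above can be pictured with a minimal sketch. It assumes a hypothetical rule table that maps each feature to the country codes where it is switched off; the feature names and the codes `XA` and `XB` (user-assigned placeholder codes, not real countries) are illustrative only and say nothing about any actual jurisdiction.

```python
# Hypothetical rule table: feature name -> country codes where it is disabled.
# "XA" and "XB" are placeholder codes, not statements about real jurisdictions.
RESTRICTED = {
    "casino_room": {"XA", "XB"},
    "token_exchange": {"XB"},
}


def is_allowed(feature: str, country_code: str) -> bool:
    """Return True if `feature` may be offered to a user in `country_code`."""
    return country_code.upper() not in RESTRICTED.get(feature, set())


def allowed_features(all_features, country_code):
    """Filter a list of features down to those permitted in one jurisdiction."""
    return [f for f in all_features if is_allowed(f, country_code)]


features = ["casino_room", "token_exchange", "art_gallery"]
print(allowed_features(features, "xa"))  # ['token_exchange', 'art_gallery']
print(allowed_features(features, "XB"))  # ['art_gallery']
```

In this sketch, features and countries missing from the table are allowed by default. A provider facing strict regulation might prefer the opposite default and block anything that has not been explicitly cleared.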

==FRAMEWORK – VIRTUAL WORLDS STACK PERSPECTIVE== | |||

While an overarching technological framework is essential to provide a comprehensive view of the dependencies between the overlapping spaces of XR, AI, IoT and communication infrastructure, we also identified a need for a practical framework that can be used as a tool. | |||

===THE VIRTUAL WORLDS TECHNOLOGICAL FRAMEWORK TOOL=== | |||

FIGURE | |||

In the light of all the listed technologies and aspects of Virtual Worlds and Web 4.0, we now provide a targeted technological framework – The Virtual Worlds Technological Framework Tool (VWTFT), explicitly designed for small and medium enterprises (SMEs), startups, and businesses aiming to enter or thrive in the rapidly evolving Virtual Worlds space. | |||

In Figure 1 we present a contextual view of the tool, focused on the key XR components important for Virtual Worlds. For reference, it also shows the dependencies on external areas and technologies, including AI, IoT, cloud and communication infrastructure, which were discussed in detail in previous sections. | |||

In constructing our framework tool, we examined the technical architecture of the most popular immersive Virtual Worlds (VRChat, Spatial.io and ENGAGE) and analysed it from the perspective of different users: 1) Consumers, 2) Providers and 3) Prosumers. We then classified components as technology- or compliance-related and matched them with specific socio-technical areas such as 1) Tools, 2) Standards, 3) Data and Integration, 4) IPR, Ethics and 5) Immersion Interfaces (UX and devices). We elicit technological layers and provide particular instances of components. We also identify external dependencies on related, overlapping spaces and classify them under Infrastructure or Core Technologies. | |||

FIGURE | |||

In short, the framework focuses on the essential components of the technical stack relevant to creating and operating immersive XR environments, offering a practical and actionable tool to help SMEs navigate and understand the technological landscape. This practical approach, while contextualising the stack with external areas, is therefore limited to the XR space and to the core XR view. By highlighting key technologies across engines, standards, data integration, intellectual property rights, ethics, and user experience considerations, this framework aims to simplify complex technological choices and address related implementation challenges, thus empowering SMEs to efficiently position themselves within the competitive and innovative Virtual Worlds market. | |||
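To make the classification more tangible, the tool can be read as a small catalogue in which each component carries a layer and a technology-or-compliance label. The sketch below is one possible encoding, not part of the tool itself: the class and field names are hypothetical, the layer of each entry follows the headings of this section, and the technology/compliance labels are our own illustrative guesses.

```python
from dataclasses import dataclass
from enum import Enum


class Kind(Enum):
    TECHNOLOGY = "technology"
    COMPLIANCE = "compliance"


@dataclass(frozen=True)
class Component:
    name: str
    layer: str   # one of the layer headings used in this section
    kind: Kind   # illustrative label, assumed for this sketch


# A handful of example instances; the Kind values are assumptions.
COMPONENTS = [
    Component("Unity", "Engines", Kind.TECHNOLOGY),
    Component("Photon Engine", "Engines", Kind.TECHNOLOGY),
    Component("OpenXR", "Standards", Kind.TECHNOLOGY),
    Component("WebRTC", "Data and Integration", Kind.TECHNOLOGY),
    Component("OAuth", "IPR", Kind.COMPLIANCE),
    Component("AI Moderation", "Ethics", Kind.COMPLIANCE),
    Component("WebXR", "Devices, UI & UX", Kind.TECHNOLOGY),
]


def by_layer(components):
    """Group component names by layer, preserving their listed order."""
    grouped = {}
    for c in components:
        grouped.setdefault(c.layer, []).append(c.name)
    return grouped


for layer, names in by_layer(COMPONENTS).items():
    print(f"{layer}: {', '.join(names)}")
```

An SME could extend such a catalogue with its own candidate technologies and filter it by layer or by label when planning a stack.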

We now describe each of the layers in more detail, elaborating on specific example instances leveraged by the most popular Virtual Worlds. Since most of these technologies were discussed in the overarching framework sections, we provide only brief references here to technologies explicitly leveraged in the stacks of the most popular VWs. The list, limited to Virtual Worlds and to technologies selected from the core XR area, is not exhaustive; the goal is to give the reader a basic notion of the leading technologies in the space for each of the layers. | |||

===Engines=== | |||

====Game Engines==== | |||

• Unity: Widely adopted due to its accessibility, robust documentation, large developer community, and flexible deployment across platforms. Ideal for SMEs due to its moderate learning curve, ease of asset integration, and extensive support for VR environments. | |||

• Unreal Engine: Known for high-fidelity graphics, realistic physics, and advanced rendering capabilities. While more complex, its Blueprints visual scripting tool offers powerful workflows for SMEs aiming for premium, photorealistic virtual environments. | |||

====Networking==== | |||

• Photon Engine: Provides scalable, real-time networking tailored for multiplayer Virtual Worlds. Its cloud-based infrastructure simplifies development, handling latency optimisation, synchronisation, matchmaking, and room management—crucial for SMEs building social or collaborative VR applications. | |||

===Standards=== | |||

Programming Languages: | |||

• C#: Dominant in Unity-based Virtual Worlds, offering simplicity, robustness, and excellent integration with existing software infrastructure. Ideal for SMEs because of its clarity, rapid prototyping capability, and extensive community support. | |||

• C++: Widely used by Unreal Engine, providing high-performance capabilities and fine-grained control over hardware resources. Ideal for SMEs targeting advanced graphical fidelity and optimised real-time interactions, though it requires greater technical expertise compared to C#. | |||

• Udon: VRChat's scripting language built on Unity and tailored specifically for social VR experiences. Ideal for SMEs seeking rapid development and deployment in community-driven platforms. | |||

Graphics and Rendering: | |||

• DirectX, OpenGL, Vulkan: These APIs are critical for optimised graphics rendering across devices. Vulkan, in particular, offers high-performance, cross-platform support, making it advantageous for SMEs aiming at multiplatform compatibility and performance optimisation. | |||

• Shaders: Fundamental in creating immersive visual effects and realistic materials in virtual environments. SMEs must balance visual fidelity and performance overhead, crucial for delivering smooth VR experiences. | |||

VR Integration: | |||

• OpenXR: Open standard simplifying XR hardware integration, ensuring platform independence, and reducing long-term technical debt—essential for SMEs needing flexible and future-proofed solutions. | |||

• SteamVR, Oculus SDK: Platform-specific SDKs offering optimised performance and native integrations. Important for SMEs targeting specific platforms or hardware ecosystems to achieve deeper functionality and user engagement. | |||

Audio: | |||

• Opus Codec: An efficient, low-latency audio codec optimised for real-time communication within virtual environments, critical for clear voice chat and immersive spatial audio. | |||

• Unity Audio Mixer: Enables real-time audio effects, spatialisation, and dynamic mixing essential for SMEs to deliver highly immersive and engaging user experiences without significant investment. | |||
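To give a rough feel for what voice chat costs in bandwidth, the following back-of-the-envelope sketch estimates the size of an Opus stream. The chosen values are assumptions for illustration only: a constant 32 kbit/s encoder bitrate, 20 ms frames with one frame per packet, and 40 bytes of per-packet overhead (IPv4 20 + UDP 8 + RTP 12). Real deployments may differ, for example when encryption or variable bitrate is used.

```python
def opus_payload_bytes(bitrate_bps: int, frame_ms: int) -> int:
    """Encoded audio bytes per frame at a constant bitrate."""
    return bitrate_bps * frame_ms // 8000  # bits/s * ms / (8 bits * 1000 ms)


def stream_bitrate_bps(bitrate_bps: int, frame_ms: int, overhead_bytes: int) -> int:
    """On-the-wire bitrate of one voice stream, one frame per packet."""
    packets_per_second = 1000 // frame_ms
    packet_bytes = opus_payload_bytes(bitrate_bps, frame_ms) + overhead_bytes
    return packet_bytes * 8 * packets_per_second


def downstream_bps(other_speakers: int, per_stream_bps: int) -> int:
    """Downstream load if every other active speaker is forwarded separately."""
    return other_speakers * per_stream_bps


payload = opus_payload_bytes(32_000, 20)       # 80 bytes per frame
stream = stream_bitrate_bps(32_000, 20, 40)    # 48000 bit/s on the wire
room = downstream_bps(9, stream)               # 432000 bit/s with 9 other speakers
print(payload, stream, room)
```

Under these assumptions, packet headers add half as much again to the audio payload, which is one reason why frame size and the number of simultaneously forwarded speakers matter for crowded social spaces.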

===Data and Integration=== | |||

====Cloud & Backend Services==== | |||

• AWS, Azure, Databases: Provide scalable hosting, reliable data storage, user authentication, and analytics services. SMEs benefit from these services through reduced infrastructure costs, flexibility in scaling operations, and streamlined data management, ensuring robust backend support for immersive experiences. | |||

====Web & Social Integration==== | |||

• REST APIs, WebRTC: Enable seamless integration of virtual worlds with external web services and real-time video/audio communications. SMEs utilising these standards can enhance social connectivity, integrate user-generated content, and build interactive collaborative experiences. | |||

===Intellectual Property Rights (IPR)=== | |||

====Content Distribution==== | |||

• CDN (Content Delivery Networks): Ensure efficient global distribution of digital assets and smooth user experience. Crucial for SMEs aiming at international audiences. | |||

• NFT (Blockchain), Digital Wallet: Blockchain-based solutions provide SMEs with transparent and verifiable mechanisms for digital asset ownership and monetisation, critical for building new business models in virtual economies. | |||

====User-Generated Content Tools==== | |||

• Unity Editor SDK, Blender, Maya, 3ds Max: Powerful tools enabling creators to produce high-quality 3D assets. SMEs leveraging these tools can foster creative ecosystems, empowering prosumers (producer-consumers) and reducing costs by integrating community-generated content effectively. | |||

====Authentication & Security==== | |||

• OAuth/Auth System, Anti-Cheat Systems: Essential for protecting user identity, securing transactions, and maintaining fairness within virtual environments. SMEs implementing robust security measures can enhance user trust, reduce liability, and comply with privacy regulations. | |||

===Ethics=== | |||

====Analytics & Monitoring==== | |||

• Telemetry & Logging, Crash Reporting: Tools that gather extensive user behaviour data, system performance insights, and usage patterns. While critical for SMEs to maintain high-quality user experience and service reliability, these technologies inherently raise significant ethical concerns around data privacy, user consent, transparency, and potential misuse of data. | |||

• AI Moderation & Analytics Voice-to-Text (e.g., OpenAI): Automated systems can identify problematic interactions, harassment, or misuse in virtual environments. SMEs must navigate the ethical balance between effective moderation, user privacy, freedom of expression, and biases inherent in AI models. Clear ethical frameworks, transparency of data usage, and adherence to regulations such as the GDPR become essential considerations. | |||
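The consent and privacy concerns raised above can be illustrated with a minimal sketch of a telemetry log that records events only for users who have opted in, stores a keyed pseudonym instead of the raw user ID, and supports access and deletion requests. The class and method names are hypothetical and the in-memory storage is a simplification; note also that pseudonymised data generally still counts as personal data under the GDPR.

```python
import hashlib
import hmac


class TelemetryLog:
    """In-memory telemetry store gated on user consent (illustrative only)."""

    def __init__(self, secret_key: bytes):
        self._key = secret_key
        self._consented = set()
        self._events = []

    def _pseudonym(self, user_id: str) -> str:
        # Keyed hash so that raw user IDs never appear in stored events.
        return hmac.new(self._key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()

    def set_consent(self, user_id: str, granted: bool) -> None:
        pid = self._pseudonym(user_id)
        if granted:
            self._consented.add(pid)
        else:
            self._consented.discard(pid)

    def record(self, user_id: str, event: str, data=None) -> bool:
        """Store an event; return False and store nothing without consent."""
        pid = self._pseudonym(user_id)
        if pid not in self._consented:
            return False
        self._events.append({"user": pid, "event": event, "data": dict(data or {})})
        return True

    def export_user(self, user_id: str) -> list:
        """Return all stored events for one user (access request)."""
        pid = self._pseudonym(user_id)
        return [e for e in self._events if e["user"] == pid]

    def delete_user(self, user_id: str) -> int:
        """Erase all events for one user; return how many were removed."""
        pid = self._pseudonym(user_id)
        before = len(self._events)
        self._events = [e for e in self._events if e["user"] != pid]
        return before - len(self._events)


log = TelemetryLog(secret_key=b"replace-with-a-real-secret")
log.record("alice", "session_start")                   # ignored: no consent yet
log.set_consent("alice", True)
log.record("alice", "crash", {"scene": "lobby"})       # stored under a pseudonym
print(len(log.export_user("alice")), log.delete_user("alice"))
```

Keeping the consent check inside `record` means that no call site can log an event for a user who has not opted in, which is easier to audit than checks scattered across the application.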

====NPC & AI Automation==== | |||

• Virtual Assistants & Trainers (OpenAI): Enhance user engagement and immersion by providing realistic, context-aware interactions. Ethical concerns for SMEs include managing bias, ensuring transparency of AI decisions (Explainable AI), avoiding manipulation or deception of users, and clearly distinguishing between human and AI interactions to maintain user trust. | |||

===Devices, UI & UX=== | |||

====XR Interfaces==== | |||

• Meta, Apple Vision Pro, Varjo, Pico, Pimax, WebXR: SMEs must select XR hardware carefully, considering factors like user demographics, performance requirements, budget constraints, and platform ecosystems. Meta's Quest offers widespread adoption and ease of development, whereas Apple, Varjo and Pimax target premium markets with high visual fidelity and professional features. WebXR is crucial for SMEs seeking maximum accessibility through browser-based immersive experiences that are not tied to a single device ecosystem. | |||

User Interface (UI) and User Experience (UX) considerations are critical for SMEs, as they affect user retention and overall success. Optimising ergonomics, accessibility, intuitive navigation, and responsiveness directly improves user satisfaction, which makes informed hardware and software choices essential. | |||

==STAKEHOLDER PERSPECTIVE VIEW== | |||

A central strength of the proposed technological framework for Virtual Worlds and Web 4.0 lies in its ability to accommodate and reflect the varying needs, roles, and priorities of key stakeholder groups—consumers, producers, and prosumers—each of whom contributes uniquely to the evolution of immersive digital ecosystems. Recognising these perspectives is essential for designing technology that is not only functionally advanced, but also inclusive, ethical, and socially grounded. | |||